The Complex Relationship Between -id and -or

The English language, notorious for its pervasive irregularities and apparent inconsistencies, includes a trend that, when applied to certain word forms, leads to some curious results. The trend to which I refer is the relationship of adjectives ending in –id to corresponding nouns ending in –or. This trend is seen exclusively amongst words with Latin roots. Here is a table containing some examples of such adjectives:

It should be noted that the exact noun form of stupid is stupidity, not stupor. And, while stupidity and stupor convey different nuances, there is enough overlap in their definitions to make the noun-adjective connection apparent. There are, however, just as many adjectives ending in –id with corresponding nouns that do not follow this trend. These nouns tend to end in the more common –idity and –ness. Here are some examples:

This second list acts as a reminder of just how irregular English word formation is. A much more interesting list, however, includes adjectives ending in –id which, if run through the –id → –or pattern, produce curious results. The following list includes the grammatically correct noun forms of these adjectives, as well as a list of nouns ending in –or which could possibly share some etymological relationship to the corresponding adjectives:

List 3: Adjectives with corresponding nouns that do not follow the -id --> -or pattern, but for which there exist other nouns, whether related to the initial adjectives or not, that do fall into the pattern

This is the list of most interest because, unlike the others, it does not merely consist of examples and counterexamples of the –id → –or pattern. It presents us with an opportunity to explore the relationships between certain adjectives and the nouns that, coincidentally or not, are spelled and pronounced so as to fit the pattern established by the first list. Could the italicized nouns give us a glimpse into arcane uses of these adjectives? It requires no stretch of the imagination to see how the meaning of valid could be related to that of valor, the actions of a person possessing the Greek quality of valor being considered valid by the societal norms of the time. Similarly, it is easy to see how a relationship between liquid and liquor could exist somewhere in the recesses of word origins. But what of vapid → vapor and humid → humor?

While the word vapid is most commonly used as a synonym for insipid or lifeless, the Oxford English Dictionary reveals that, in the 17th century, vapid was used to mean, “Of a damp or steamy character; dank, vaporous.” Here, it seems, lies the –id → –or connection between vapid and vapor, an outdated relationship betrayed by the modern word forms in which it no doubt resulted. To unearth the connection between humid and humor also requires the pondering of a definition that has fallen out of use. The Oxford English Dictionary lists, among its definitions of humid, several relevant usages that haven’t been common for centuries. While the primary definition is, “Slightly wet as with steam, suspended vapor, or mist; moist, damp,” several historical uses of the word include, “In medieval physiology, said of elements, humours…Said of a chemical process in which liquid is used…Of diseases: Marked by a moist discharge.” This conception of humor (a reference to the Four Humours or Temperaments, medical notions that hark back to ancient Egypt and Greece), here referring to wetness, gave rise to our modern notion of the word, currently used to mean, “Mood natural to one’s temperament…” and, “The faculty of perceiving what is ludicrous or amusing, or of expressing it in speech, writing, or other composition.” To uncover the relationship requires a look at classical medicine and its assumptions regarding the functioning of the human body.

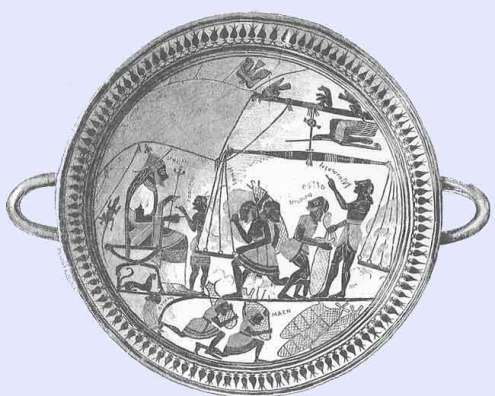

A chart outlining the relationship of the four humours (blood, yellow bile, black bile, and phlegm) with the physical body and its personality traits

According to this conception of human health, one whose composition is characterized by moistness walks the line between sanguine and phlegmatic. Those with sanguine temperaments were ascribed the traits of extroversion and sociability, while phlegmatics were considered kind and relaxed. It does not require a great leap to bridge the gap between the ancient notion of physiological humidity and our contemporary use of the word humorous to suggest a jovial and comedic personality. The following image shows how moisture was associated with a sanguine-phlegmatic temperament:

Having deciphered the aforementioned relationships through a bit of etymological archaeology, it seems necessary to address one final list of words. Here is a list of common nouns ending in –or with corresponding adjectives that seem to follow no discernible pattern:

List 4: Nouns ending in -or with corresponding adjectives that do not follow the -id --> -or pattern

While this last list does not present any immediately obvious clues regarding outdated word usage, it leads to another interesting line of inquiry. Why is there no adjective form of temblor in the English language, whether modern or arcane? According to the Oxford English Dictionary, the word temblor, synonymous with earthquake, is an Americanism not seen in print earlier than 1876. Since the word is so young and is not commonly used, perhaps it simply never had the chance to sprout related adjectives. Perchance this could lead to the temblorous conclusion that English word forms only emerge if they are necessary, and do not merely arise out of grammatical convention.
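For readers who like to tinker, the –id → –or pattern discussed in this entry amounts to a simple suffix swap. The following is only a rough sketch: the function name `id_to_or` is made up for illustration, and the code does nothing to check whether the resulting noun is a real English word or whether it is etymologically related to the adjective.

```python
from typing import Optional


def id_to_or(adjective: str) -> Optional[str]:
    """Swap a trailing "id" for "or" (hypothetical helper, for illustration only).

    Returns None when the word does not end in "id", since the pattern
    does not apply to it.
    """
    if adjective.endswith("id"):
        return adjective[:-2] + "or"
    return None


# Adjective/noun pairs mentioned in this entry.
for adjective in ["stupid", "valid", "liquid", "vapid", "humid"]:
    print(adjective, "->", id_to_or(adjective))
```

Whether each output is a meaningful counterpart to its adjective is exactly the question that takes a dictionary, rather than a string operation, to answer.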

Some Further Reading:

An article about Greek virtue ethics

A look at the classical conception of the four bodily humours

An exhaustive list of –id adjectives that makes special note of vapor and humor. Oddly, this list suggests that valor and valid are unrelated, while glossing over the relationship between humor and humid

Ned Maddrell, the Last Native Speaker of Manx

Situated in the Irish Sea between Ireland and England, the Isle of Man is, according to its government’s website, “An internally self-governing dependent territory of the Crown which is not part of the United Kingdom.” Inhabited since the 7th millennium BC, the island has hosted a variety of cultures practicing diverse traditions.

Although the island’s official language is currently English, the native tongue was, until recent decades, the peculiar Goidelic language of Manx Gaelic. (Insular Celtic languages are split into two groups: Manx, Irish, and Scottish Gaelic are dubbed Goidelic, while Breton, Cornish, and Welsh are dubbed Brythonic. The two groups are widely believed to share a common precursor.) This historically significant era came to an end on December 27, 1974, when Ned Maddrell, the last native speaker of Manx, died at the age of ninety-seven.

Fortunately for the sake of cultural preservation, Maddrell allowed linguists to record him speaking Manx in the late 1940s when he was one of only two living native speakers. Upon the 1962 death of the other native speaker, Sage Kinvig, Maddrell became something of a celebrity in linguistic circles. Students of Goidelic languages flocked to him in order to learn what they could of the imperiled language before his death. Maddrell’s willingness to expound upon Manx proved invaluable to its preservation, even if only for academic purposes. Here is an example of spoken Manx, available to us due in no small part to Maddrell:

To put the demise of Manx in context, here is part of a speech by the Office of the Spokesperson for the Secretary-General of the United Nations from February of 2009:

Some further reading:

An article that discusses the origins of Manx as well as its last native speakers

Some information about the Isle of Man from its government’s official website

A look at insular Celtic languages that distinguishes between Goidelic and Brythonic

An article with links to many different endangered languages

The Giant and the Midget

In the wake of the Great Depression, President Franklin D. Roosevelt was desperate to garner public and political support for the New Deal, an ambitious program that, he hoped, would pump much-needed stimulation into the United States’ stumbling economy. In 1933 the Senate Banking Committee held a series of hearings to investigate the financial practices of banking giant J.P. Morgan, Jr., who controlled the corporate behemoth founded by his father, and was also instrumental in establishing the Federal Reserve in 1913. Morgan’s high profile set the stage for his testimony to receive wide media coverage. This may have been the first event, in fact, to be dubbed a “media circus”.

John Pierpont Morgan, Jr., 1867-1943.

Coincidentally, the Ringling Brothers Circus was in Washington during the dates of Morgan’s testimony. Capitalizing on this peculiar opportunity, the circus’ press agent arranged for one of its performers, a 27-inch-tall midget named Lya Graf, to be present at the public hearings. When Graf was awkwardly introduced to Morgan, she hopped onto his lap and sat like a child. This allowed for a most interesting photograph to be taken. Depicting a physically deformed sideshow performer sitting on the lap of a man who epitomized wealth and corporate greed, this image captures in a whimsical yet biting way the disparities between the rich and the poor in the United States during the height of corporate capitalism in the early 20th Century. Simultaneously silly and grotesque, the contrast between Graf and Morgan visually expresses the vast inequalities that defined the economic state of America in 1933. It was an image that spoke volumes.

The contrast between Morgan and Graf, most strikingly that of their sizes and implicit power, acted as a real life political cartoon, simultaneously explaining and satirizing the inequalities that defined Depression Era America. The popularity of this photo improved Morgan’s public image and turned Graf into a media darling, if only briefly.

This image resonates particularly well in modern-day America, in which class division and unchecked corporate accumulation are reminiscent of the Depression Era. Imagine how poignant a photograph of Warren Buffett cradling an illegal Mexican laborer would seem in light of the current social and economic climate.

Some Further Reading:

An excerpt from a book on the Morgan family that discusses this incident

An article focusing on Graf and her role in this photograph

A succinct introduction to Roosevelt, the Depression, and the New Deal

Thursday, the Day of Thor

Irrational Geographic is so often concerned with notions ancient and arcane that, in this novel entry, I’ve decided to take an opposite approach. Today is Thursday, the 6th of August. So as to remain as temporally present and as commonplace as possible, I have decided to make an inquiry into Thursday itself. One seventh of our shared existence is spent inside of this designated period of time, so its origins, both as an entity and as a word, are of undeniable interest.

An artistic representation of the months and seasons of the modern Gregorian calendar, here juxtaposed with the ancient Hebrew calendar.

Thursday is designated the fifth day of the week according to the Gregorian calendar, which is currently the Western standard for the temporal demarcation of the year (there are, of course, other calendrical systems currently in use, including the Jewish and Hindu calendars, and that of the Nigerian Igbo with their curious four-day week). This is only the case because Sunday is widely designated as the week’s first day, an honor bestowed upon the day, named after the year-defining sun (from the Old English word Sunnandæg, “Day of the sun”), by Judeo-Christian calendrical tradition. Some nations, including the United Kingdom, on the other hand, still consider Sunday to be the week’s seventh day, making Thursday the fourth. The Chinese word for Thursday, in fact, means fourth. The ancient Greeks and Romans would have taken issue with this, however, each designating their equivalent of Sunday as the week’s first day, associating it with supreme divinity.

The legendary Colossus of Rhodes, one of the Seven Wonders of the Ancient World, depicted Helios, the Greek sun god, for whom the first day of the week was named.

Despite the fact that Thursday sits on the opposite side of the week from Sunday, its namesake is certainly a source of great historical power and significance. Its moniker originates in a culture very different from that of Sunday. Thursday takes its name from Thor, simultaneously the ancient Norse god of thunder and Germanic god of protection.

“Thor’s Battle Against the Giants” by Swedish painter Mårten Eskil Winge, 1872.

One of the oldest recorded deities of Scandinavian polytheistic culture, Thor (also referred to as Donar in some Germanic linguistic traditions) served as a symbol of Pagan resistance and cultural pride in the face of the monotheistic Christian encroachment upon Scandinavia beginning in the 8th century. Perhaps the fact that remnants of Thor grace our modern calendars (Thursday having taken the place of Dies Iovis, the ancient Latin “Day of Jupiter”) suggests that this resistance was never fully quelled despite the fact that, by the 12th century, Christianity had all but beaten Paganism out of the region.

A Medieval map of Scandinavia.

Like the evergreen tree decorated with candles and ribbons displayed during the Christmas celebration, the prominent inclusion of Thor’s name in a predominantly Judeo-Christian calendar is an instance of the hybridization of Pagan and monotheistic traditions that has survived into modern times. While the Christian crusaders of the Middle Ages may have aimed to bend the world to their will, they themselves received some cultural battle scars that are still visible today. Our word for Thursday is just such a scar, scratched approximately fifty-two times across the face of every modern Western calendar.

Some Further Reading:

A look at the Igbo calendar as it relates to the notion of a spiritual cosmic order

A simple breakdown of the Hindu calendar

A tool that allows for the conversion of dates between the Gregorian and Jewish calendars

An essay that discusses the origins of the Christmas tree and its Pagan connections

A Wikipedia entry including some excellent charts comparing day nomenclature cross-culturally

The Lost Panacea of Silphium

Native to the ancient Greek colony of Cyrene (located in modern-day Libya), Silphium (also known as laser) is an extinct plant that, in its heyday, was one of the most treasured medicinal resources of the ancient world. Employed by cultures all around the Mediterranean, Silphium was used as a spice, a cure-all medicinal remedy, a form of birth control, and an abortifacient. Famed scholars ranging from Pliny the Elder to Herodotus to Theophrastus all wrote of Silphium’s legendary potency. Despite its widespread popularity, Silphium allegedly refused to grow anywhere aside from Cyrene. The colony became so closely identified with the plant that it appears on the settlement’s coins.

Silphium, here seen on Cyrene's coins, was the colony's chief export. The plant was notoriously resistant to cultivation, and is believed to have been harvested to extinction within the first few centuries AD.

Silphium was so strongly desired by various ancient civilizations that it was, at times, valued above currency. With some Romans contending that the plant was a gift from the god Apollo, its extinction was considered a great tragedy. Pliny even wrote that the last known Silphium plant was given to the Roman Emperor Nero himself.

An artifact from the 6th century BC believed to depict King Arcesilaus II of Cyrene overseeing the weighing of Silphium.

The Egyptians shared the Romans’ veneration of the plant, associating it with human love and sexuality. The Egyptian glyph signifying the heart portion of the soul, in fact, may have been meant to picture the seed of the Silphium plant. This character, known to the Egyptians as Ib, is likely the origin of our modern heart symbol.

Here is an ancient Cyrene coin bearing the image of a Silphium seed. Its likeness both to the Egyptian Ib and to the modern heart symbol is striking.

While the world has been without Silphium and its powers for well over a millennium, our modern culture still bears its mark. Every time a love-dazed youth carves a heart into a tree or inserts a whimsical, heart-shaped emoticon into an online conversation, the plant that once commanded a king’s ransom is winking at us from the ghostly recesses of the Earth’s past. Like the Dodo bird that gave us an insult implying stupidity or the dinosaur that inhabits every child’s imagination, Silphium’s potency is strong enough to overcome the silencing power of extinction itself.

Some Further Reading:

Some information about Silphium’s possible use as birth control and an abortifacient

An article entitled “Abortion in the Ancient and Premodern World”

An article about ancient methods of measurement, including brief mention of Silphium from Cyrene

An essay addressing the five parts of the Egyptian soul, including Ib

Victorian Postmortem Portraiture

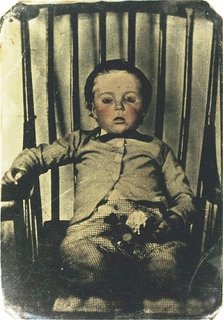

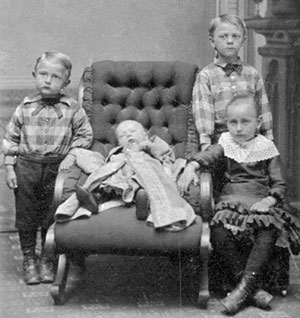

During the Victorian Era (1837 – 1901) in the United States and Europe, a peculiar funerary practice emerged. Due in part to the high youth mortality rates and in part to the recent popularization of daguerreotype photography, it became commonplace to have professional portraits taken of recently deceased loved ones, most commonly children.

These morbid images were often the only glimpses of the deceased that distant relatives ever had the chance to experience. Originally considered luxuries, such portraits became increasingly affordable as ambrotypes and tintypes were developed during the second half of the 19th century.

Since these photographs were meant to serve as memorials of the individuals’ lives, the subjects were often formally dressed, fully made-up, and strapped upright in chairs to give them a semblance of vitality. This effect was sometimes enhanced by pink tinting later added to the photographs, making the lifeless faces appear flushed.

It was not uncommon for parents to pose with the corpses of their infants, or for children to surround the remains of their siblings.

Later examples of postmortem portraiture occasionally depict the deceased propped upright in coffins, simultaneously simulating life and acknowledging the lifelessness of the corpse.

While this tradition has all but vanished in the modern-day United States and most of Europe, various Orthodox communities in Eastern Europe still practice this form of commemoration, although focusing primarily on distinguished members of their religious communities. There are also some organizations in contemporary America that aim to revive this antiquated tradition. However, due to shifting norms and modern conceptions of death and bodies, photographs of corpses are perceived more as unseemly than as sentimental, evoking shudders of chilled terror in those who happen to glimpse the open eyes of a lifeless face.

Some Further Reading:

The homepage of the Thanatos Archive, a collection of 19th century postmortem portraits

Earthquake Fish, Earthquake Weather, Earthquake Clouds, Earthquake Light

Appearing in ancient texts from many cultures across the globe, earthquakes have been a source of fear and speculation since time immemorial. With an average of 18 major temblors striking per year (mostly in the Pacific Ring of Fire), it is small wonder that this violent phenomenon has driven humans to desperate attempts at earthquake prediction. While few modern cultures accept, as some once did, that earthquakes are caused by celestial struggles or air trapped beneath the earth’s surface, many still point to early warning signs with origins in ancient mythology. The following earthquake prediction techniques are not supported by mainstream modern science, but are nonetheless widely embraced by individuals and organizations determined to gain a foothold against one of nature’s most destructive habits.

Earthquake Fish

More commonly known as the ribbonfish, these oddly shaped creatures dwell at great depths and commonly measure up to 8 feet long. Taiwanese legend points to these slender fish when attempting to predict earthquakes, claiming that these deep-sea dwellers rise to the surface in the moments before a quake strikes. Modern seismology has shown no correlation between the activities of these fish and actual earthquakes.

Earthquake Weather

Some claim that the Loma Prieta earthquake that ravaged the San Francisco Bay Area in 1989 was preceded by "earthquake weather". This photograph depicts the part of the Bay Bridge that collapsed during the temblor, which was broadcast on live TV due to the fact that the San Francisco Giants were playing in the World Series at the time.

Perhaps the most prevalent folk superstition regarding earthquakes in the modern-day United States, the notion of earthquake weather in Western culture dates at least as far back as Herodotus (c. 484 BC – c. 425 BC). Aristotle wrote about this meteorological phenomenon as well, attributing earthquakes to subterranean winds. Warm, calm weather, he believed, would precede seismic activity. While modern seismologists dismiss this notion as foolish and unfounded, I have personally witnessed this widespread superstition in action. On one unseasonably warm afternoon in San Francisco I was warned by multiple people to be wary, for we were experiencing, they claimed, typical earthquake weather. Fortunately, that day ended without disaster.

Earthquake Clouds

Discussed by Indian scholar Daivajna Varāhamihira as early as the 6th century, peculiar cloud formations are believed by some to rapidly appear in anticipation of earthquakes. Similar observations have appeared in Chinese and European writings of antiquity. These long, slender clouds that have been likened to snakes are said to form in a matter of seconds, acting as a grim premonition to observers below. Modern seismologists are divided about the legitimacy of this prediction technique, which does, at least superficially, seem to show some semblance of legitimacy. These clouds, it is hypothesized, correspond to temperature changes along fault lines that can accompany increased seismic activity and the eruption of heated gases. The thermodynamic mechanisms by which terrestrial temperature changes affect cloud formation, however, have yet to be demonstrated in a way that satisfies the scientific community. Until this can be successfully done, earthquake clouds will remain relegated to the realm of superstition.

Earthquake Light

This beautiful luminescence was spotted in the sky over Tianshui, Gansu province about 30 minutes before the Sichuan earthquake of May 12, 2008.

Similar in appearance to the polar aurorae (borealis and australis), earthquake light is said to include a wider range of colors. It has been embraced as a harbinger of earthquakes since ancient Greece, and several 20th century earthquakes have, many claim, been preceded by these beautiful lights. Minutes before an earthquake struck Sichuan province in China in 2008, cell phone video footage of these lights was uploaded to YouTube. Skeptics contend that these lights were merely the result of sunlight refracted by atmospheric moisture. Neuroscientist Michael Persinger has attempted to explain these mysterious lights through his Tectonic Strain Theory, which links seismic activity to electromagnetic fields that can be misinterpreted by human cognition as lights or even UFOs.

While each of these prediction techniques has its fervent proponents, evidence for their reliability is not sufficient for them to be employed by the United States Geological Survey Earthquake Hazards Program. This does little, however, to dissuade individuals from looking to ancient wisdom for comfort in the face of a violent force so overwhelmingly powerful that it effortlessly causes the world’s most developed nations to grovel before it in fear. This speaks to the common occurrence of drastic emotion overriding and even dashing to bits all the pristine knowledge of the academic ivory tower. In the face of violent death, sometimes there is only the terrified individual against an indifferent quagmire of external forces.

Some Further Reading:

A frequently updated site tracking earthquake clouds

The United States Geological Survey’s homepage for earthquake information

An article exploring many facets of earthquake clouds

A brief look at the Tectonic Strain Theory

The National Earthquake Information Center

An article proposing a scientific explanation for earthquake lights

A video depicting the earthquake lights that purportedly predicted the Sichuan quake of 2008

The Montauk Monster

I have hidden this entry from view due to the grotesque nature of some photographs contained within. Click the link below to see the full article.

The Peculiar Cant of Ciazarn

It is not uncommon for a specialized vocabulary to be spoken amongst members of a group united by profession. Sailors, soldiers, actors, and doctors all regularly speak words and phrases that are rarely, if ever, used by lay people. But only seldom throughout history does one find groups of people among whom nuanced and extensive systems of secretive slang, known as cants, have emerged. One of my favorite such bodies of slang is the cant, known as Ciazarn, spoken by American carnival workers (carnies) during the first half of the 20th century.

The word Ciazarn itself (pronounced KEY-uh-zarn) illustrates the mechanics of this cant. In order to convert a normal word into Ciazarn, extra syllables, usually consisting of i, a, and z sounds, are added into the middle of the word. Carny becomes key-uh-ZAR-nee, hence the name of the cant. Another example is the word gimmick which, in Ciazarn, is pronounced as gee-ya-ZIM-ick. The rules of this cant are simple enough, but when spoken rapidly it allowed carnies to openly converse with one another without being understood by the carnival patrons. When coupled with an extensive vocabulary of additional slang terms, this linguistic contortion allowed the carnies to easily collude in bilking rubes.
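The mechanics described above can be approximated in a few lines of code. This is only a sketch under a loud assumption: the cant was spoken, not written, so the spelling rule used here (insert the letters "iaz" just before the word's first vowel) is a guess that happens to reproduce the examples in this entry. The function name `to_ciazarn` is invented for illustration.

```python
VOWELS = "aeiou"


def to_ciazarn(word: str, infix: str = "iaz") -> str:
    """Insert the infix just before the first vowel of the word.

    Assumption: a spelling-based stand-in for a rule that really
    operates on sounds, so irregular spellings will come out oddly.
    """
    lower = word.lower()
    for i, ch in enumerate(lower):
        if ch in VOWELS:
            return lower[:i] + infix + lower[i:]
    return lower  # no vowel found: leave the word unchanged


for word in ["carny", "gimmick", "some", "further", "reading"]:
    print(word, "->", to_ciazarn(word))
```

Read aloud, "ciazarny" and "giazimmick" come out close to key-uh-ZAR-nee and gee-ya-ZIM-ick.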

It should be noted that some contemporary hip-hop slang follows similar guidelines. In the early 21st century, rappers Snoop Dogg and Jay-Z popularized the insertion of “izzle” into the middle of words. Perhaps the most well-known example of this is the phrase “fo shizzle”, which is a modified version of “for sure”. This is very close to the Ciazarn version of the phrase, which would have been pronounced “for SHE-uh-zor”. Another popular cant used in modern English is Pig Latin, which follows a distinct yet similarly simple set of rules for word alteration. Pig Latin differs in that it moves the word’s opening consonant sound to the end and adds an “ay” sound after it, “sure” being pronounced “uhr-shay”.
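For comparison, Pig Latin as it is commonly played moves a word's leading consonants to the end and tacks on "ay". The sketch below works on spelling rather than sound, which is an assumption on my part, and the function name `to_pig_latin` is invented for illustration. Conventions for words that begin with a vowel vary; this version simply appends "ay".

```python
def to_pig_latin(word: str) -> str:
    """Move the leading consonant cluster to the end and append "ay".

    Assumption: a spelling-based approximation of a spoken game, so
    "sure" becomes "uresay" even though it is said "uhr-shay".
    """
    lower = word.lower()
    for i, ch in enumerate(lower):
        if ch in "aeiou":
            return lower[i:] + lower[:i] + "ay"
    return lower + "ay"  # no vowel found: just append the ending


for word in ["sure", "carny", "gimmick"]:
    print(word, "->", to_pig_latin(word))
```

Where Ciazarn hides a word by stuffing syllables into its middle, Pig Latin hides it by shuffling its front to the back.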

See-uh-zum Fee-uh-zurther Re-uh-zeading:

An article about Parlyaree, a cant spoken among members of the British gay subculture during the 1950s and 1960s (some of the phrases remind me of Anthony Burgess’ Nadsat, mentioned in a previous entry)

A compendium of Vaudeville slang that is certainly worth reading

A list of various cants, along with links to articles about them

The Pitfalls of Supernormal Stimuli

“…(supernormal stimulus) refers to a paradoxical effect whereby animals show greater responsiveness to stimuli that differ substantially from the “natural” stimulus.”

– J.E.R. Staddon, Behavioral Psychologist

I recently learned of the writings of Dutch ethologist Nikolaas Tinbergen (1907-1988), who performed some fascinating experiments on birds, specifically oystercatchers.

Shorebirds found all across the globe, oystercatchers possess a hard-wired trait that caught Tinbergen’s attention. After laying several eggs, female oystercatchers are faced with the choice of which egg to brood atop, and thus incubate the enclosed embryo until its hatching. Since larger eggs are more likely to produce healthy chicks, these birds are programmed to select their largest eggs for brooding. This tendency produces very odd results, however, when it is exposed to supernormal stimuli. In this case, Tinbergen placed the much larger eggs of a totally different species of bird alongside the oystercatchers’ eggs. Surprisingly, the oystercatchers hopped right atop these absurdly large eggs, ignoring their own.

Here is former Food and Drug Administration Commissioner David A. Kessler’s explanation of this counterintuitive behavior:

“From the standpoint of evolution, a bird’s preference for a larger egg over a smaller one makes sense. Smaller eggs are more likely to be nonviable, so birds that consistently choose them would not have been likely to survive as a species. Their preference for a giant egg is a logical extension of a preference for the egg that seems most likely to be viable.”

The power of the supernormal stimulus of egg size to hijack the oystercatchers’ ingrained survival mechanisms is strong enough to cause these birds to cuckold themselves, expending precious time and energy to bring another bird’s child into the world. While this effect is curious when observed among birds, its results are more startling when applied to humans. An oft-cited example of this is the contentious issue of the portrayal and attractiveness of female bodies. For example, throughout history many women have had their bodies altered to make their breasts bigger or appear to be bigger, whether through body-molding clothing or, more recently, surgery and photo editing.

Venus of Willendorf is a limestone carving of a woman’s body discovered in Lower Austria. It is believed to date from 24,000 BCE – 22,000 BCE. Her breasts are swollen to absurd proportions.

The Venus of Willendorf carving shows that the issue of male attraction to exaggerated, unnatural female forms is nothing new. University of Cambridge archaeologist Paul Mellars observes that, “…(Venus of Willendorf) could be seen as bordering on the pornographic.” This is an example of how male attraction to breasts, although having originally coevolved with actual female breasts, can be triggered by portrayals of exaggerated and unnatural breasts.

Another illustration of how supernormal stimuli can kidnap ingrained human desires and redirect them toward artificial ends can be found on the menu of any fast food restaurant.

This chart compares the calories and fat contained in hamburgers served at some leading fast food chains

When competing for survival, pre-agricultural humans benefited greatly from eating as much animal fat as they could get their hands on due to its rich caloric content. As a result, the human tongue and brain grew to find fat quite palatable. In modern society it is much easier to gain access to fatty foods, but the tongue and brain can still be motivated by a primal desire to eat as much fat as possible, as if stockpiling calories in the face of potential periods of famine. In their book Understanding Nutrition, nutritionists Ellie Whitney and Sharon Rady Rolfes claim that a healthy adult should consume 45 to 75 grams of fat each day. Many of the hamburgers on the above chart, however, exceed this daily amount despite the fact that they are meant to comprise just one meal (foods containing wildly inflated amounts of fat, salt, sugar, and so on are dubbed “hyperpalatable” by food scientists).

The overwhelming popularity of these restaurants (McDonald’s having famously served “billions and billions”) demonstrates how a human drive that originally aided survival now leads many to consume significantly more fat than their bodies require, potentially to the detriment of their health. These chain restaurants, however, are not fully to blame for selling such hyperpalatable food. As W. Philip T. James of the International Obesity Task Force points out, “It is little wonder that food manufacturers, responding to taste panels and sales returns, have focused, particularly in the last two decades, on providing this evolutionarily rare but highly prized sensory mix as a routine in an increasingly varied number of foods made for convenient consumption.”

There are many more examples of supernormal stimuli leading people to self-destructive behavior, ranging from drug addiction (certain drugs, such as cocaine, stimulate a massive release of dopamine in the brain, whereas dopamine is released in much smaller quantities under natural circumstances) to video game enthusiasts literally starving to death after playing World of Warcraft for days on end without interruption. The conclusion to be drawn is that, in order to enjoy the spoils of modern life healthfully in the face of ubiquitous supernormal stimuli, a certain resistance to one’s own chemical drives is necessary. This is no easy task, however, since the brain is literally built to perceive the satisfaction of these desires as necessary for survival.

Some Further Reading:

A very interesting article about how supernormal stimuli are used in product marketing